The Shift from Static Models to Autonomous Scientists

Artificial intelligence is moving beyond static chatbots that merely respond to queries and toward autonomous agents capable of independent work. At the forefront of this shift is Andrej Karpathy, a founding member of OpenAI and a prominent figure in the AI community. His recent release of an open-source research agent, known as AutoResearch, has sparked wide discussion about the future of scientific discovery and the automation of research and development (R&D).

The core idea behind this movement is simple: instead of humans manually iterating on code and experiments, we can design systems where the AI itself manages the trial-and-error process. This is not just about better autocomplete; it is about building an agent that can navigate the vast solution space of machine learning on its own. With short, fixed-length runs such as the five-minute experiments described below, a single agent can complete at most 12 runs per hour, or roughly a hundred overnight, far more than a human researcher could run by hand in the same time.

The Karpathy Loop: How AutoResearch Works

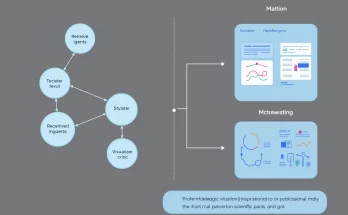

At the heart of Karpathy’s autonomous agent is a concept often referred to as the “autonomous loop.” Unlike traditional software development, where a human writes, tests, and debugs code, the AutoResearch framework lets the AI operate on its own Git feature branch. The system follows an iterative cycle of improvement with four key stages:

- Code Iteration: The AI agent analyzes the existing training script (typically written in PyTorch) and proposes modifications to the neural network architecture, optimizer settings, or hyperparameters.

- Autonomous Training: The system automatically launches a training run. In Karpathy’s demonstration, each run is a “micro-experiment” with a fixed five-minute budget, long enough to gauge the effect of a change without consuming large amounts of compute.

- Evaluation: Once the training is complete, the agent evaluates the results—usually measuring the validation loss or bits-per-byte (bpb).

- Persistence: If the change improves the model, the agent commits the code to the repository. If not, it discards the change and tries a different strategy.
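
The four stages above can be sketched as a simple keep-or-discard loop. This is a minimal illustration under stated assumptions, not the AutoResearch implementation: `propose_change`, `train_and_evaluate`, and `research_loop` are hypothetical names, the “training run” is a toy formula rather than a real five-minute PyTorch job, and the corpus statistics are invented. The `bits_per_byte` helper shows the standard conversion from a mean cross-entropy in nats per token to bits per byte.

```python
import math
import random


def bits_per_byte(mean_nats_per_token, n_tokens, n_bytes):
    """Convert a mean cross-entropy (nats per token) into bits per byte."""
    total_bits = mean_nats_per_token * n_tokens / math.log(2)
    return total_bits / n_bytes


def propose_change(config, rng):
    """Hypothetical stand-in for the agent's code edit: rescale one hyperparameter."""
    candidate = dict(config)  # copy, so the current best stays untouched
    key = rng.choice(sorted(candidate))
    candidate[key] *= rng.uniform(0.5, 2.0)
    return candidate


def train_and_evaluate(config):
    """Toy stand-in for a fixed-budget training run plus evaluation.

    Returns a made-up validation score in bits per byte (lower is better)
    that is smallest near lr=1e-3 and weight_decay=0.1.
    """
    lr_penalty = (math.log10(config["lr"]) + 3.0) ** 2
    wd_penalty = (math.log10(config["weight_decay"]) + 1.0) ** 2
    mean_nats_per_token = 3.0 + 0.1 * (lr_penalty + wd_penalty)
    # Assumed corpus statistics: 1,000 tokens covering 4,000 bytes.
    return bits_per_byte(mean_nats_per_token, n_tokens=1_000, n_bytes=4_000)


def research_loop(config, n_experiments, seed=0):
    """Run the propose / train / evaluate / persist cycle n_experiments times."""
    rng = random.Random(seed)
    best_score = train_and_evaluate(config)
    history = [best_score]
    for _ in range(n_experiments):
        candidate = propose_change(config, rng)   # code iteration
        score = train_and_evaluate(candidate)     # training + evaluation
        if score < best_score:                    # persistence: keep ("commit")
            config, best_score = candidate, score
        # otherwise the candidate is simply dropped ("discard")
        history.append(best_score)
    return config, best_score, history


if __name__ == "__main__":
    start = {"lr": 0.1, "weight_decay": 1.0}
    best_config, best_score, history = research_loop(start, n_experiments=50)
    print(f"start {history[0]:.3f} bpb -> best {best_score:.3f} bpb")
```

Because a candidate is kept only when it beats the current best, the recorded best score can never get worse from one experiment to the next. In a real setup, the “commit” and “discard” branches would correspond to Git operations on the agent’s feature branch.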

Because each loop runs without supervision, the approach lends itself to parallel research. A single researcher can oversee a “command center” in which dozens of agents explore different architectural paths at the same time. This mirrors other efforts, such as the Alibaba OpenClaw initiative, which aims to distribute agentic workloads across vast ecosystems.

Software 3.0 and the Human as an Architect

Karpathy has frequently discussed the transition to what he calls “Software 3.0.” If Software 1.0 is code written by humans (C++, Python) and Software 2.0 is the weights of a neural network, learned from data rather than written by hand, then Software 3.0 is programming in natural language: prompts that direct large language models. Agentic systems build on this layer, translating high-level human intent into complex actions. In this new paradigm, the role of the human programmer shifts from a “code monkey” to a high-level architect.

In the context of AutoResearch, the human’s primary task is no longer to tweak learning rates or layer sizes. Instead, the human iterates on the prompt—the strategic instructions that guide the agent’s exploration. This is a higher level of abstraction that requires a deep understanding of the problem domain rather than just the syntax of the language. By focusing on the strategy, humans can direct AI agents to solve problems that were previously too complex or time-consuming for manual experimentation.

This shift is also reflected in the tools we use. Traditional Integrated Development Environments (IDEs) are evolving to accommodate teams of agents. We are seeing the rise of AI coding agents that don’t just suggest the next line of code but manage entire repositories and deployment pipelines independently.

Democratizing Scientific Discovery

One of the most exciting implications of autonomous research agents is the democratization of science. Traditionally, frontier AI research was the exclusive domain of “meat computers”—human PhDs working at prestigious institutions with access to massive compute clusters. However, Karpathy’s AutoResearch is designed to be minimal and efficient, often running on a single GPU.

This accessibility means that independent researchers and smaller startups can run the kind of automated experimentation that once required the resources of a large lab. According to the Stanford Institute for Human-Centered AI (HAI), the ability of language model agents to outperform humans in specialized tasks is one of the key trends for 2025. By automating the “grunt work” of experimentation, we are effectively lowering the barrier to entry for innovation. The goal is for agents to sustain the fastest possible research progress indefinitely, without constant human intervention.

The Impact on Biotechnology and Engineering

While Karpathy’s initial experiments focused on large language models (LLMs), the same agentic principles are already being applied to other fields. In biotechnology, autonomous agents are being used to analyze microbiome data and plan therapeutic discovery; in physics, they are being used to search for new scaling laws. The ability of an agent to read 100 research papers, synthesize the data, and then design a new experiment overnight could be a game-changer for drug discovery and materials science.

Challenges: Governance and “Intelligence Brownouts”

As we become more dependent on these autonomous loops, new challenges arise regarding security and governance. If the world comes to rely on agents for scientific breakthroughs, what happens when these systems fail? Karpathy has warned of “intelligence brownouts”: scenarios where a widespread loss of access to frontier AI could lead to a sudden drop in our collective problem-solving capacity.

Furthermore, autonomous loops must be carefully governed to prevent unintended consequences. An agent instructed to “improve performance at any cost” might find ways to “game” the evaluation metrics rather than making genuine progress. Ensuring that these agents remain aligned with human goals and safety standards is a critical area of ongoing research at organizations like OpenAI and NVIDIA.
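
One common safeguard against metric gaming is to confirm every claimed improvement on data the agent never sees. The sketch below is an assumption for illustration and is not described in the AutoResearch release: `accept_change` and its parameters are hypothetical, and all scores are treated as losses, where lower is better.

```python
def accept_change(val_before, val_after, holdout_before, holdout_after,
                  min_gain=0.0, holdout_tolerance=0.0):
    """Accept a change only if the validation gain is confirmed on held-out data.

    val_*     : loss on the validation set the agent optimizes against
    holdout_* : loss on a separate set that is hidden from the agent
    """
    improved_on_val = (val_before - val_after) > min_gain
    no_holdout_regression = holdout_after <= holdout_before + holdout_tolerance
    return improved_on_val and no_holdout_regression


if __name__ == "__main__":
    # Genuine progress: both scores improve.
    print(accept_change(1.00, 0.90, 1.00, 0.95))
    # Suspicious: large validation gain, but the hidden set gets worse.
    print(accept_change(1.00, 0.50, 1.00, 1.20))
```

A change that improves the visible metric while degrading the hidden one is rejected, which makes it harder for an agent to win by overfitting to the evaluation rather than improving the model.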

Conclusion: Entering the Decade of Agents

The excitement surrounding Andrej Karpathy’s autonomous research agent is not just about a single repository on GitHub. It represents a fundamental shift in the human-machine relationship. We are entering the “Decade of Agents,” where the speed of progress will be determined not by how fast we can type, but by how effectively we can orchestrate autonomous intelligence.

As these tools become more sophisticated, the line between “doing research” and “engineering a researcher” will continue to blur. For the global scientific community, the promise is clear: a world where breakthroughs that once took years could happen in a matter of days. By embracing the autonomous loop, we may be opening a new era of human potential, one in which our greatest discoveries are still to come.