The Next Frontier in Intelligent Software Development

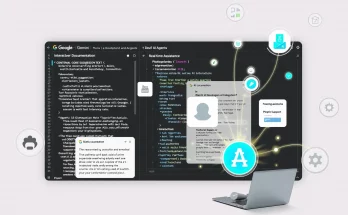

OpenAI has officially begun the limited rollout of GPT-5.2 Codex-Max, a specialized iteration of its latest flagship model series designed specifically for the rigors of professional software engineering. While earlier models like GPT-4o and the initial GPT-5 releases laid the groundwork for AI-assisted programming, Codex-Max represents a fundamental shift toward agentic coding. This means the AI is no longer just a snippet generator; it is becoming an active collaborator capable of managing complex, multi-file workflows.

The release comes at a time when competition in the AI space is fiercer than ever. As Zhipu AI challenges OpenAI with its own advanced agents, OpenAI is leaning heavily on its “Codex-Max” architecture to maintain its dominance among developers and enterprise teams. The model is currently being tested by select ChatGPT Plus and Team subscribers, with a broader enterprise rollout expected during the first quarter of 2026.

Key Features of the GPT-5.2 Codex-Max Architecture

Unlike standard LLMs that focus on general-purpose chat, GPT-5.2 Codex-Max is fine-tuned for the structural and logical requirements of high-level programming. According to early technical documentation, the model features several key upgrades:

- Long-Horizon Reasoning: Codex-Max can plan and execute coding tasks that require dozens of sequential steps, such as refactoring an entire microservice or migrating a legacy codebase.

- Repository-Level Awareness: The model utilizes an expanded context window and proprietary indexing methods to “understand” an entire project directory, rather than just the file currently open in an editor.

- Defensive Cybersecurity: In addition to writing code, the model is trained to identify vulnerabilities in real time and suggest patches before the code is committed to a repository.

- Agentic Tool Use: Codex-Max can autonomously run tests, interpret terminal output, and adjust its code based on compiler errors without human intervention.
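The test-and-fix cycle described under Agentic Tool Use can be pictured as a small control loop. The following is only a minimal sketch of that general pattern, not OpenAI's implementation: `run_tests` and `propose_fix` are hypothetical callables standing in for a test runner and a model call, and the step budget and repeated-candidate check are assumptions added here to keep the loop from running forever.

```python
from typing import Callable, Optional, Tuple

# Hypothetical interfaces (assumptions for illustration, not a real API):
#   run_tests(code)          -> (passed, output)
#   propose_fix(code, output) -> revised code
RunTests = Callable[[str], Tuple[bool, str]]
ProposeFix = Callable[[str, str], str]


def agent_fix_loop(code: str, run_tests: RunTests, propose_fix: ProposeFix,
                   max_steps: int = 5) -> Tuple[Optional[str], str]:
    """Test, then revise, until tests pass, a candidate repeats, or
    max_steps revisions have been attempted."""
    seen = {code}
    for _ in range(max_steps):
        passed, output = run_tests(code)
        if passed:
            return code, "passed"
        candidate = propose_fix(code, output)
        if candidate in seen:
            # The same code came back: the loop is cycling, so stop.
            return None, "cycle"
        seen.add(candidate)
        code = candidate
    # Check the last revision before giving up.
    passed, _ = run_tests(code)
    return (code, "passed") if passed else (None, "budget_exhausted")


# Toy stand-ins so the sketch runs without a model or a real test suite.
def toy_run_tests(code: str) -> Tuple[bool, str]:
    if "a + b" in code:
        return True, ""
    return False, "AssertionError: add(2, 3) != 5"


def toy_propose_fix(code: str, output: str) -> str:
    return code.replace("a - b", "a + b")


result = agent_fix_loop("def add(a, b): return a - b",
                        toy_run_tests, toy_propose_fix)
print(result)
```

In a real setup the two callables would wrap a sandboxed test command and a model request. The explicit budget and the `seen` set are the parts worth keeping, since they bound how long an unattended agent can run.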

Enhanced Debugging and Feature Implementation

One of the most notable improvements in GPT-5.2 is its ability to handle “unseen” bugs. While previous models relied heavily on pattern matching from their training data, GPT-5.2 uses a new “Thinking” architecture to simulate code execution paths. This allows it to debug production-level code more reliably, identifying logic errors that syntax checkers cannot catch. This move aligns with OpenAI’s NextGenAI vision, which prioritizes deep reasoning over mere information retrieval.

Performance Benchmarks: Codex-Max vs. The Field

Initial benchmarks suggest that GPT-5.2 Codex-Max outperforms its predecessors by a significant margin. On SWE-bench, a benchmark built from real-world GitHub issues, the model reportedly showed a 40% improvement in issue resolution compared to GPT-4.1. This leap is attributed to its improved ability to navigate large-scale dependencies and its reduced “hallucination” rate when dealing with specific API calls.

However, the AI landscape remains a “three-way tie” at the top. Competitors like Google’s Gemini 3.0 Pro and Anthropic’s Claude 4.5 are also pushing the boundaries of autonomous coding. While Codex-Max leads in agentic task completion, some developers have noted that Gemini still holds a slight edge in raw processing speed for massive codebases, particularly when integrated via Google Cloud infrastructure.

Challenges and Community Feedback

Despite the technical strengths of the new model, the rollout has not been without its hurdles. Early adopters on developer forums have reported instances of performance degradation during peak usage hours. Some users noted that the “Thinking” process, while accurate, can lead to longer response times, occasionally taking several minutes to plan a complex refactoring task. This has led to a debate within the community: is accuracy more important than the near-instantaneous feedback loop that developers have grown accustomed to?

There are also reports of “agentic loops,” where the AI becomes stuck in a repetitive cycle of fixing and breaking the same piece of logic. OpenAI has acknowledged these teething issues and is expected to release a series of patches (likely GPT-5.2.1) to address stability and latency. Pricing also remains a significant barrier for smaller teams, with the GPT-5.2 Pro tier reaching rates of $168.00 per 1 million output tokens, reflecting the massive compute power required to sustain these advanced models.
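To put the quoted price in perspective, the arithmetic is easy to sketch. The snippet below uses only the $168.00 per 1 million output tokens figure cited above; the token counts are hypothetical, and input-token charges are ignored because the article does not quote them.

```python
from decimal import Decimal

# USD per 1,000,000 output tokens, as quoted in the article.
OUTPUT_PRICE_PER_MILLION = Decimal("168.00")


def output_cost_usd(output_tokens: int,
                    price_per_million: Decimal = OUTPUT_PRICE_PER_MILLION) -> Decimal:
    """Cost of the output tokens alone, rounded to cents."""
    cost = Decimal(output_tokens) * price_per_million / Decimal(1_000_000)
    return cost.quantize(Decimal("0.01"))


# Hypothetical workload: one long refactoring task emitting 50,000 output tokens.
per_task = output_cost_usd(50_000)
# Twenty such tasks in a day add up to 1,000,000 output tokens.
per_day = output_cost_usd(20 * 50_000)
print(per_task, per_day)  # 8.40 168.00
```

At that rate, a small team running a few dozen long agentic tasks a day would spend hundreds of dollars daily on output tokens alone, which is the barrier the early feedback points to.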

The Shift Toward an Audio-First and Multi-Modal Future

Perhaps the most intriguing aspect of the GPT-5.2 rollout is its integration into OpenAI’s broader strategy. Reports suggest that the company is shifting toward an “audio-first” future, where developers can speak complex instructions to their IDE and watch as Codex-Max executes them in the background. This transition aims to break the traditional reliance on screen-based interactions, allowing for a more fluid, natural language-driven development environment.

This “voice-to-code” capability is being developed in tandem with hardware partnerships, possibly aimed at reducing the influence of existing ecosystems from companies like Apple and Google. By creating a direct, AI-mediated interface for software creation, OpenAI is positioning itself as the primary operating system for the next generation of digital builders.

Looking Ahead: What GPT-5.2 Means for Developers

The arrival of GPT-5.2 Codex-Max signals that we are entering an era where the role of the software engineer is shifting from “writer” to “editor” and “architect.” As these models handle the boilerplate, syntax, and routine debugging, human developers will be free to focus on high-level system design and creative problem-solving. While the current rollout is limited, the impact of Codex-Max will likely be felt across the entire tech industry as it sets a new standard for what AI agents can achieve in professional environments.

For those looking to leverage these tools, the key will be mastering agent orchestration: learning how to direct an autonomous AI to navigate a codebase safely and effectively. As hardware continues to evolve with more powerful chips from NVIDIA, the latency issues currently affecting early rollouts are expected to ease, paving the way for a more seamless AI-human coding partnership.